Methodology

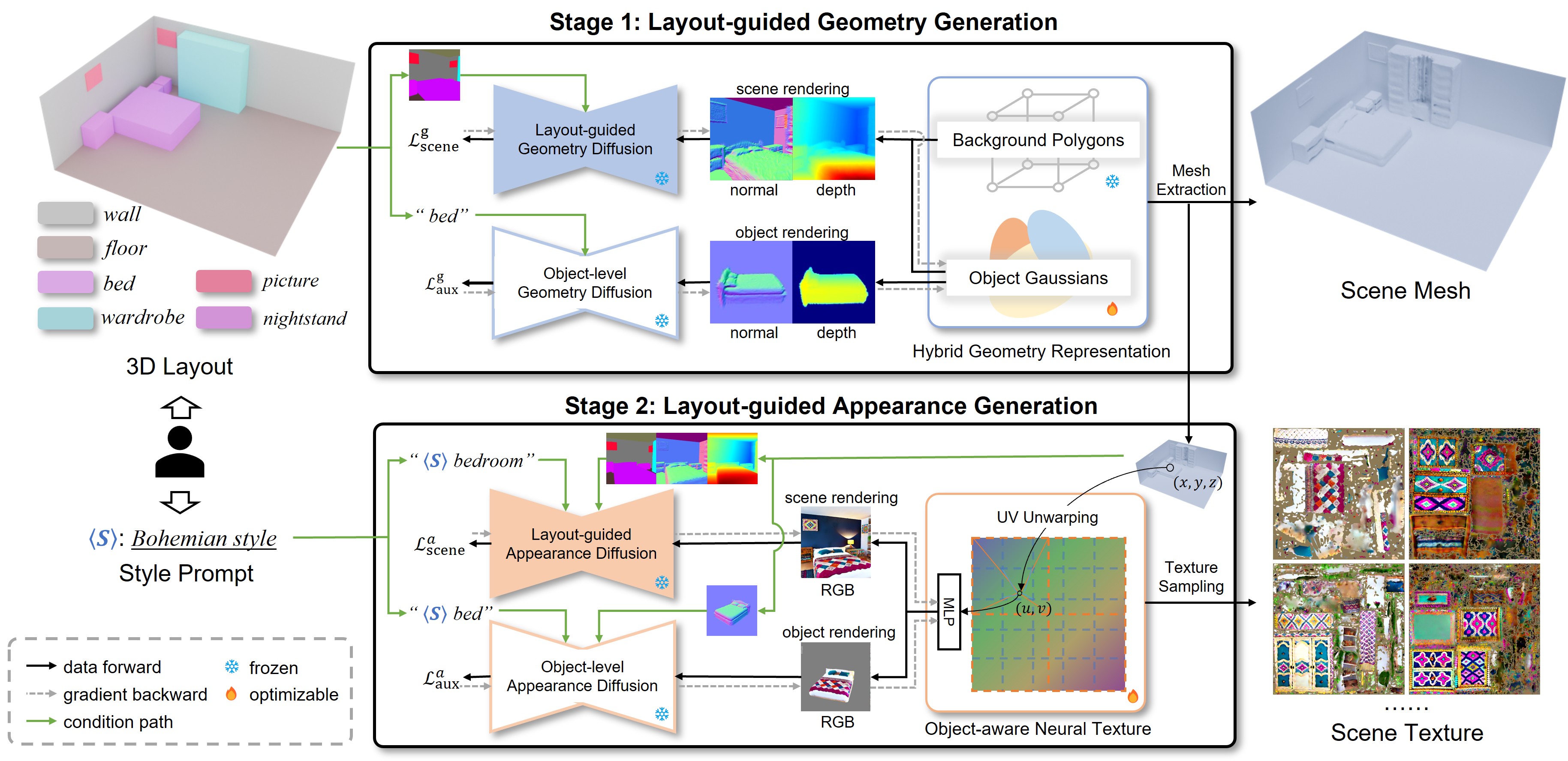

Our method generates scene meshes and textures from a user-specified scene layout and a style prompt through a two-stage framework. (1) Layout-guided geometry generation: We adopt a hybrid scene representation in which objects are modeled with 3D Gaussians and the background with polygons. This representation is optimized with a layout-guided geometry diffusion model and an object-level geometry diffusion model, and the final scene mesh is extracted from the optimized representation. (2) Layout-guided appearance generation: Given the generated scene mesh, we employ an object-aware neural texture to represent the textures of all objects and the background. This neural texture is optimized with a layout-guided appearance diffusion model and an object-level appearance diffusion model, and is then converted into high-quality texture maps for each object and for the background through a texture sampling process.
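The data flow of the two stages can be sketched as follows. This is only an illustrative skeleton, not the implementation: every name in it (`LayoutBox`, `HybridScene`, `SceneMesh`, `generate_geometry`, `generate_appearance`, and the `guidance_steps` callbacks) is hypothetical, the diffusion-guided optimization is reduced to optional callbacks that default to doing nothing, and mesh extraction and texture sampling are replaced by string placeholders.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class LayoutBox:
    """One user-specified object box in the scene layout (hypothetical type)."""
    name: str
    center: Vec3
    size: Vec3


@dataclass
class HybridScene:
    """Objects as 3D Gaussians, background as polygons (placeholder contents)."""
    object_gaussians: Dict[str, List[Vec3]]  # per-object Gaussian centers
    background_polygons: List[str]


@dataclass
class SceneMesh:
    """Stand-in for the extracted scene mesh: just the names of its parts."""
    parts: List[str]


def generate_geometry(
    layout: Sequence[LayoutBox],
    guidance_steps: Sequence[Callable[[HybridScene], HybridScene]] = (),
) -> SceneMesh:
    """Stage 1: build the hybrid representation, optimize it, extract a mesh."""
    # Assumption: seed each object with a single Gaussian at its box center.
    scene = HybridScene(
        object_gaussians={box.name: [box.center] for box in layout},
        background_polygons=["floor", "walls", "ceiling"],
    )
    # Each callback stands in for one update driven by the layout-guided or
    # object-level geometry diffusion model.
    for step in guidance_steps:
        scene = step(scene)
    # Placeholder for mesh extraction: one part per object plus the background.
    return SceneMesh(parts=list(scene.object_gaussians) + ["background"])


def generate_appearance(
    mesh: SceneMesh,
    style_prompt: str,
    guidance_steps: Sequence[Callable[[Dict[str, str], str], Dict[str, str]]] = (),
) -> Dict[str, str]:
    """Stage 2: optimize an object-aware neural texture, then sample texture maps."""
    # One latent entry per mesh part is what makes the texture "object-aware" here.
    neural_texture = {part: f"latent[{part}]" for part in mesh.parts}
    # Each callback stands in for one update driven by the layout-guided or
    # object-level appearance diffusion model, conditioned on the style prompt.
    for step in guidance_steps:
        neural_texture = step(neural_texture, style_prompt)
    # Placeholder for texture sampling: one texture map per part.
    return {part: f"texture_map({latent})" for part, latent in neural_texture.items()}


layout = [
    LayoutBox("sofa", (0.0, 0.0, 1.0), (2.0, 0.8, 0.9)),
    LayoutBox("table", (0.0, 0.0, -0.5), (1.2, 0.5, 0.6)),
]
mesh = generate_geometry(layout)
textures = generate_appearance(mesh, "a cozy Scandinavian living room")
print(mesh.parts)  # ['sofa', 'table', 'background']
```

The point of the sketch is the interface between the stages: stage 2 consumes only the mesh produced by stage 1 plus the style prompt, and it returns one texture map per object and one for the background.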